How to Build LLM Agents for Automated Digital Solutions

Learn how to build LLM agents that automate digital solutions, schedule workflows, and integrate any tool via multi-channel communication for total automation.

Compare DeepSeek V4 and GPT-5.5 on benchmarks, pricing, and use cases. Find which AI model fits your workflow in 2026.

DeepSeek V4 vs GPT-5.5: choosing the right AI model in 2026 comes down to your budget and how much you need autonomous agent performance. DeepSeek V4 delivers large cost savings, open-source flexibility, and a 1-million-token context window. GPT-5.5 offers stronger agentic capabilities, enterprise-grade reliability, and higher scores on the software-engineering benchmarks compared here. DeepSeek V4 Pro costs $1.74 per million input tokens compared to GPT-5.5's $5.00, while GPT-5.5 scores 88.7% on SWE-bench Verified versus DeepSeek V4 Pro's 80.6%. This head-to-head comparison breaks down benchmarks, pricing, strengths, and use cases so you can pick the right model for your workflow.

With BeFreed’s AI-powered book summaries and podcasts, you can quickly get up to speed on the AI concepts behind these frontier models — from transformer architectures to prompt engineering — in just 10 to 40 minutes per topic.

| Feature | DeepSeek V4 Pro | DeepSeek V4 Flash | GPT-5.5 | GPT-5.5 Pro |
|---|---|---|---|---|
| Release Date | April 24, 2026 | April 24, 2026 | April 23, 2026 | April 24, 2026 |
| Model Type | Open-source (MoE) | Open-source (MoE) | Closed-source | Closed-source |
| Total Parameters | 1.6 trillion | 284 billion | Not disclosed | Not disclosed |
| Active Parameters | 49 billion per token | 13 billion per token | Not disclosed | Not disclosed |
| Context Window | 1,000,000 tokens | 1,000,000 tokens | 256,000 tokens | 256,000 tokens |
| Input Price (per 1M tokens) | $1.74 | $0.14 | $5.00 | $30.00 |
| Output Price (per 1M tokens) | $3.48 | $0.28 | $30.00 | $80.00 |
| SWE-bench Verified | 80.6% | Not available | 88.7% | Not listed separately |
| Open Weights | Yes, on Hugging Face | Yes, on Hugging Face | No | No |
| API Compatibility | OpenAI + Anthropic format | OpenAI + Anthropic format | OpenAI format | OpenAI format |

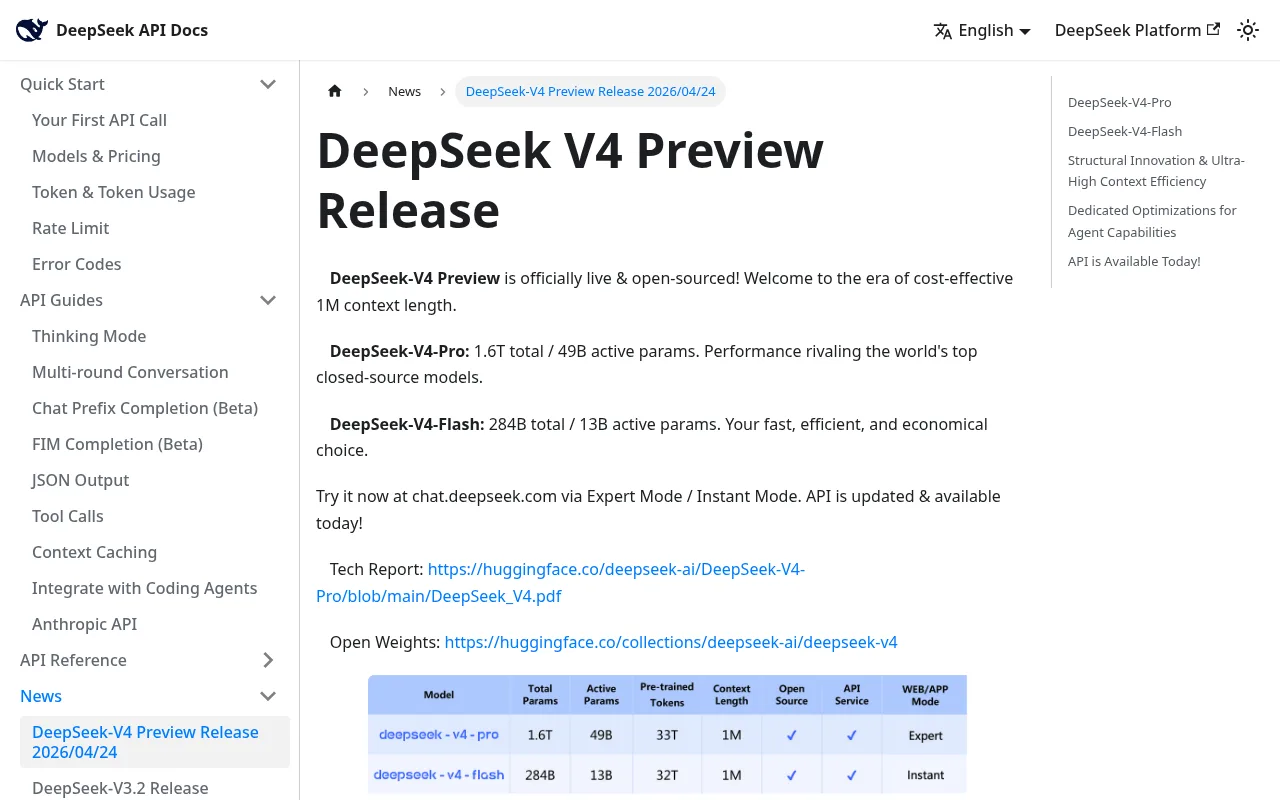

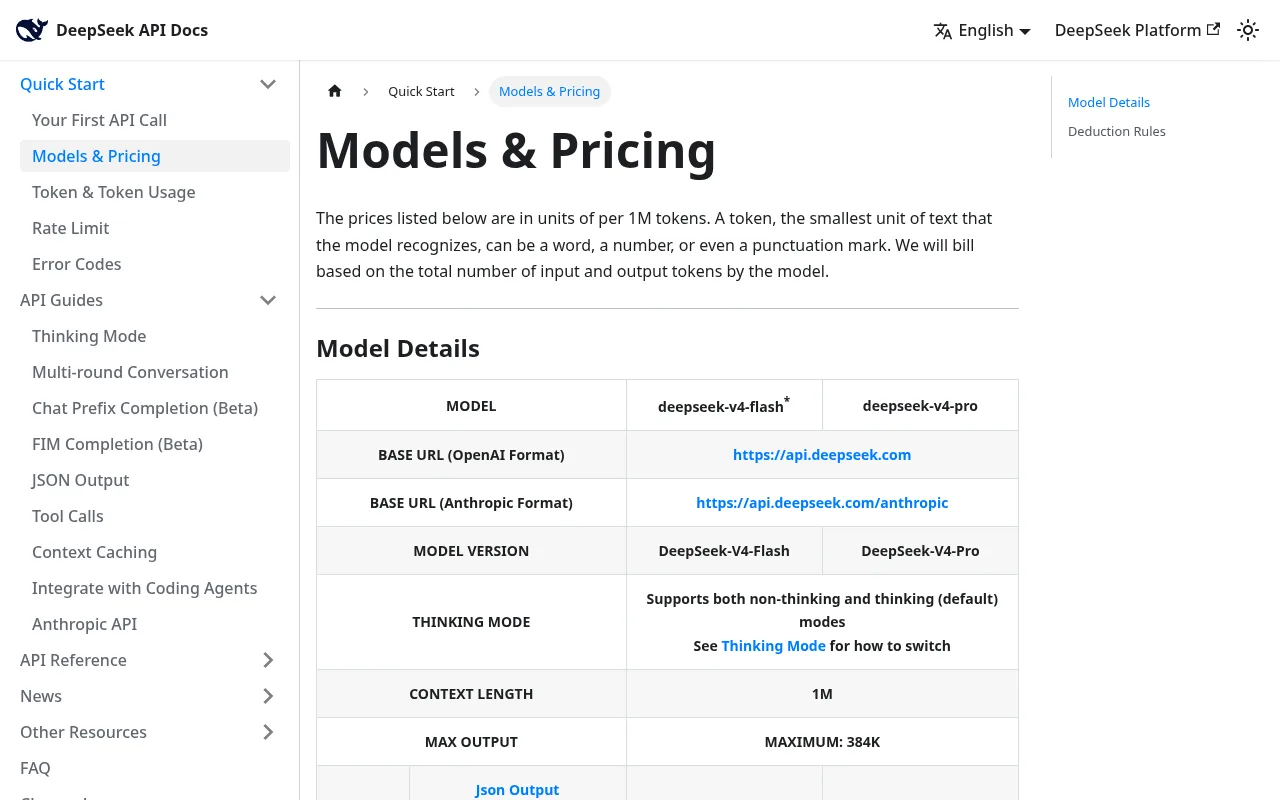

DeepSeek V4 is an open-source AI model family released on April 24, 2026, built for cost-efficient, large-scale reasoning and coding tasks. It launched as a preview with two tiers: V4 Pro and V4 Flash.

The architecture uses a Mixture of Experts (MoE) design. V4 Pro packs 1.6 trillion total parameters but only activates 49 billion per token, keeping inference fast and affordable. V4 Flash is even leaner at 284 billion total parameters with 13 billion active. Both models support a native 1-million-token context window — not a bolt-on feature, but designed from the ground up.
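To make "only activates 49 billion per token" concrete, here is a minimal sketch of generic top-k expert routing, plus the active-parameter fraction for both V4 tiers. It assumes a plain softmax-over-top-k gate on a toy layer of scalar "experts"; DeepSeek's actual router (gating function, shared experts, load balancing) is not described in this article, so `top_k_route` and `moe_layer` are hypothetical illustrations, not DeepSeek's implementation.

```python
import math
import random


def top_k_route(gate_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    m = max(gate_logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(gate_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(x: float, experts, gate_logits: list[float], k: int) -> float:
    """Weighted sum over the selected experts only; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of all weights that participate in computing one token."""
    return active_params / total_params


if __name__ == "__main__":
    random.seed(0)
    # Hypothetical toy layer: 8 scalar "experts", 2 active per token.
    experts = [lambda x, s=s: s * x for s in range(1, 9)]
    logits = [random.gauss(0.0, 1.0) for _ in experts]
    print(top_k_route(logits, k=2))
    print(moe_layer(1.0, experts, logits, k=2))
    # Parameter counts from the comparison table.
    print(f"V4 Pro:   {active_fraction(49e9, 1.6e12):.1%} active")   # → 3.1% active
    print(f"V4 Flash: {active_fraction(13e9, 284e9):.1%} active")    # → 4.6% active
```

Because per-token compute scales with the active weights rather than the total, V4 Pro does roughly the work of a ~49B dense model per token while storing 1.6T parameters.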

DeepSeek V4 also introduces major efficiency gains. According to the official release, the model needs only 27% of the FLOPs and 10% of the KV cache that V3.2 requires, thanks to a hybrid attention system that combines Compressed Sparse Attention and Heavily Compressed Attention. Storing expert weights in FP4 halves their memory requirements compared with prior versions.
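The storage claim is simple arithmetic on bits per weight. The sketch below assumes, for simplicity, that every weight is stored at a single precision and that the prior baseline was FP8; in the release only the expert weights move to FP4, so real figures will differ. The 40 GB KV-cache baseline is a purely hypothetical number used to show how a "10% of V3.2" figure scales.

```python
def weight_storage_gb(num_params: float, bits_per_weight: int) -> float:
    """Raw weight storage in GB (1 GB = 1e9 bytes); ignores activations and KV cache."""
    return num_params * bits_per_weight / 8 / 1e9


def scaled(baseline: float, fraction_of_baseline: float) -> float:
    """Apply a reported 'X% of V3.2' figure to a baseline measurement."""
    return baseline * fraction_of_baseline


if __name__ == "__main__":
    # Simplifying assumption: all weights at one precision, FP8 as the old baseline.
    for name, params in [("V4 Pro", 1.6e12), ("V4 Flash", 284e9)]:
        fp8 = weight_storage_gb(params, 8)
        fp4 = weight_storage_gb(params, 4)
        print(f"{name}: {fp8:,.0f} GB at FP8 -> {fp4:,.0f} GB at FP4")
    # Hypothetical 40 GB KV cache under V3.2, scaled by the reported 10%.
    print(f"KV cache: {scaled(40.0, 0.10):.0f} GB")
```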

Both V4 models are fully open-source on Hugging Face. Developers can download weights, run them locally, fine-tune them, and access the API at platform.deepseek.com. The API supports both OpenAI ChatCompletions and Anthropic API formats.
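Because the API accepts the OpenAI ChatCompletions format, a request is an ordinary JSON POST with a `model` and a `messages` list. The sketch below only builds the request with Python's standard library so it can be inspected offline. The endpoint `https://api.deepseek.com/chat/completions` is an assumption based on DeepSeek's OpenAI-compatible setup and should be checked against the current docs, and `deepseek-v4-pro` is a hypothetical model identifier, not a confirmed name.

```python
import json
import urllib.request

# Assumed OpenAI-compatible base URL; verify against DeepSeek's API docs.
BASE_URL = "https://api.deepseek.com"
# Hypothetical placeholder: look up the real V4 model identifier before use.
MODEL = "deepseek-v4-pro"


def build_chat_request(api_key: str, prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a ChatCompletions-style POST request."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("YOUR_API_KEY", "Summarize MoE routing in one sentence.")
    print(req.get_method(), req.full_url)
    # Sending it would be: urllib.request.urlopen(req), which needs a valid key.
```

Keeping request construction separate from sending makes it easy to swap `BASE_URL` and `model` when moving the same payload between OpenAI-format providers.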

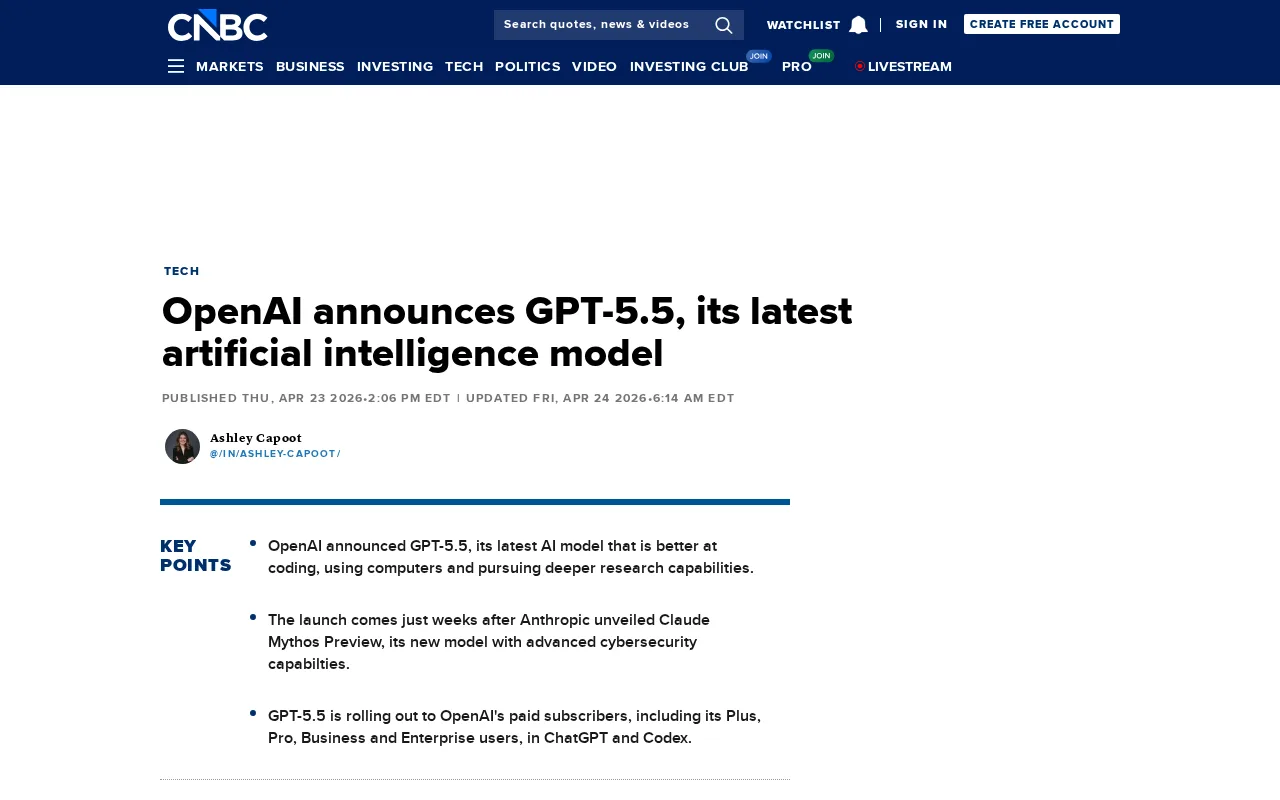

GPT-5.5 is OpenAI’s flagship model released on April 23, 2026, designed for advanced reasoning, autonomous agent workflows, and enterprise reliability. OpenAI describes it as their “smartest and most intuitive to use model yet.”

The model excels at writing and debugging code, researching online, analyzing data, creating documents, operating software, and moving across tools until a task is finished. The gains are especially strong in agentic coding, computer use, and knowledge work.

A major reliability improvement sets GPT-5.5 apart: OpenAI reports a 60% hallucination reduction compared to GPT-5.4. This makes the model significantly safer for enterprise deployments where factual accuracy is non-negotiable. GPT-5.5 matches GPT-5.4’s per-token latency in real-world serving while performing at a higher intelligence level.

GPT-5.5 features a 256K context window. While smaller than DeepSeek V4’s 1M offering, it is highly optimized for complex instruction following and agentic behavior. The model ships in two tiers: standard GPT-5.5 and GPT-5.5 Pro, available to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex.
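The context-window difference matters when a single request is large, such as a whole codebase or a long contract. This sketch checks which tier can hold a prompt plus room for the reply, using the window sizes from the comparison table. It assumes the window covers input and output together; some APIs also cap output separately, which this does not model, and the token counts in the example are hypothetical.

```python
# Context windows (tokens) from the comparison table.
CONTEXT_WINDOWS = {
    "DeepSeek V4 Pro": 1_000_000,
    "DeepSeek V4 Flash": 1_000_000,
    "GPT-5.5": 256_000,
    "GPT-5.5 Pro": 256_000,
}


def models_that_fit(prompt_tokens: int, reserved_output_tokens: int) -> list[str]:
    """Return the tiers whose window holds the prompt plus the reserved reply budget."""
    needed = prompt_tokens + reserved_output_tokens
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


if __name__ == "__main__":
    # Hypothetical workload: a 300K-token repository dump plus an 8K-token answer.
    print(models_that_fit(300_000, 8_000))
```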

If you want to understand how these models execute complex, multi-step tasks, listen to Building AI agents that actually do the work — it covers practical workflows for using autonomous AI agents.

Learn how to build LLM agents that automate digital solutions, schedule workflows, and integrate any tool via multi-channel communication for total automation.

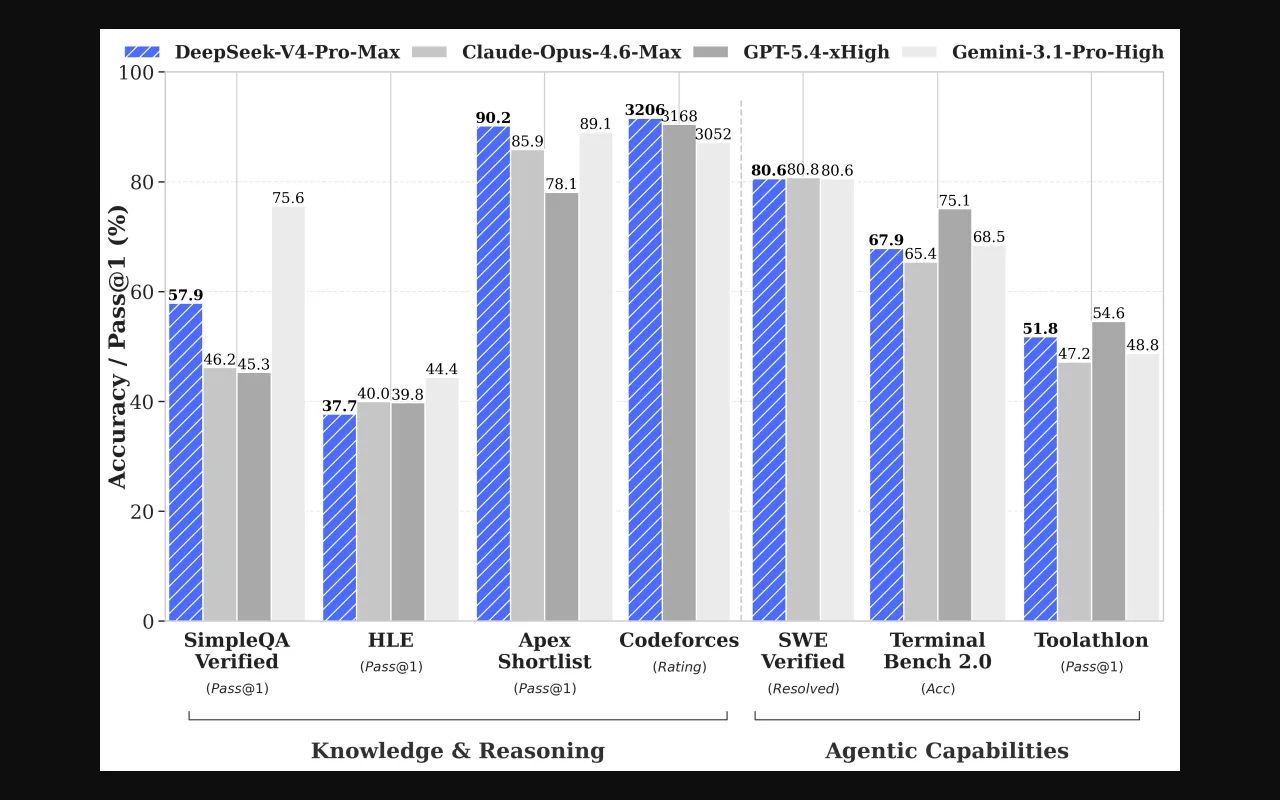

GPT-5.5 outperforms DeepSeek V4 on enterprise-focused benchmarks like SWE-bench and Terminal-Bench, while DeepSeek V4 posts excellent scores in competitive programming and reasoning tasks.

| Benchmark | DeepSeek V4 Pro | GPT-5.5 | What It Measures |
|---|---|---|---|
| SWE-bench Verified | 80.6% | 88.7% | Real GitHub issue resolution |
| Terminal-Bench 2.0 | 67.9% | 82.7% | Command-line and system operations |
| MMLU / MMLU-Pro | 87.5% (MMLU-Pro) | 92.4% (MMLU) | General world knowledge |
| GPQA Diamond | 90.1% | Not published | Graduate-level science reasoning |
| LiveCodeBench | 93.5% | Not published | Competitive programming |
| Codeforces Rating | 3,206 | Not published | Competitive programming ranking |
| SWE-bench Pro | 55.4% | 58.6% | Advanced software engineering |

GPT-5.5 shows a clear advantage in real-world software engineering. On SWE-bench Verified, it scores 88.7%, an 8.1-point lead over DeepSeek V4 Pro's 80.6%. The gap widens on Terminal-Bench 2.0, which tests command-line interaction and multi-step system tasks: GPT-5.5 scores 82.7% versus DeepSeek V4 Pro's 67.9%, a 14.8-point difference that highlights its stronger agentic capabilities.

DeepSeek V4 shines on competitive programming and pure reasoning, although GPT-5.5 has not published results on these benchmarks, so they show DeepSeek's strength rather than a head-to-head win. It achieves 93.5% on LiveCodeBench and holds a Codeforces rating of 3,206, ranking 23rd among human competitors. On GPQA Diamond, a graduate-level science reasoning benchmark, DeepSeek V4 scores 90.1%.

On general knowledge, GPT-5.5 reports 92.4% on MMLU, while DeepSeek V4 Pro reports 87.5% on the harder MMLU-Pro variant. Because these are different benchmark versions, the two numbers cannot be compared directly.
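One way to keep the MMLU versus MMLU-Pro caveat honest in your own evaluation notes is to store each benchmark separately and compute gaps only where both models report the same test. This sketch uses the scores from the benchmark table; `None` marks "Not published", and the Codeforces rating is left out because it is not a percentage.

```python
from typing import Optional

Score = Optional[float]

# (DeepSeek V4 Pro, GPT-5.5) percentages from the benchmark table.
SCORES: dict[str, tuple[Score, Score]] = {
    "SWE-bench Verified": (80.6, 88.7),
    "Terminal-Bench 2.0": (67.9, 82.7),
    "SWE-bench Pro": (55.4, 58.6),
    # Different tests, so they stay in separate rows instead of being paired.
    "MMLU": (None, 92.4),
    "MMLU-Pro": (87.5, None),
    "GPQA Diamond": (90.1, None),
    "LiveCodeBench": (93.5, None),
}


def comparable_gaps(scores: dict[str, tuple[Score, Score]]) -> dict[str, float]:
    """GPT-5.5 minus DeepSeek V4 Pro in points, only where both report the same benchmark."""
    return {
        name: round(gpt - ds, 1)
        for name, (ds, gpt) in scores.items()
        if ds is not None and gpt is not None
    }


if __name__ == "__main__":
    for name, gap in comparable_gaps(SCORES).items():
        print(f"{name}: GPT-5.5 ahead by {gap} points")
```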

DeepSeek V4 costs a fraction of GPT-5.5. The pricing gap is the single biggest factor for teams choosing between these models.

| Model Tier | Input (per 1M tokens) | Output (per 1M tokens) | Cache Hit Input (per 1M tokens) |
|---|---|---|---|
| DeepSeek V4 Flash | $0.14 | $0.28 | $0.028 |
| DeepSeek V4 Pro | $1.74 | $3.48 | $0.145 |
| GPT-5.5 | $5.00 | $30.00 | Not published |
| GPT-5.5 Pro | $30.00 | $80.00 | Not published |
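Cache-hit pricing lowers the effective input price in proportion to how often a request reuses a cached prefix. The sketch below blends the cache-miss and cache-hit prices from the table for a given hit rate. The hit rate itself is a workload-dependent assumption you would measure yourself, and the GPT-5.5 tiers are omitted because their cache pricing is not published.

```python
from decimal import Decimal

# USD per 1M input tokens from the pricing table: (cache miss, cache hit).
INPUT_PRICES = {
    "DeepSeek V4 Flash": (Decimal("0.14"), Decimal("0.028")),
    "DeepSeek V4 Pro": (Decimal("1.74"), Decimal("0.145")),
}


def effective_input_price(model: str, cache_hit_rate: Decimal) -> Decimal:
    """Blend miss and hit prices by the fraction of input tokens served from cache."""
    if not Decimal(0) <= cache_hit_rate <= Decimal(1):
        raise ValueError("cache_hit_rate must be between 0 and 1")
    miss, hit = INPUT_PRICES[model]
    return cache_hit_rate * hit + (1 - cache_hit_rate) * miss


if __name__ == "__main__":
    # Hypothetical hit rates, e.g. a long shared system prompt reused across calls.
    for rate in ("0", "0.5", "0.9"):
        price = effective_input_price("DeepSeek V4 Pro", Decimal(rate))
        print(f"V4 Pro at {rate} hit rate: ${price} per 1M input tokens")
```

Using `Decimal` keeps the cent-level figures exact, which matters once these prices are multiplied by billions of tokens.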

Scenario 1: Startup Chat Application (10M input + 2M output tokens/day)

| Model | Daily Cost | Monthly Cost (30 days) |
|---|---|---|
| DeepSeek V4 Pro | $24.36 | $730.80 |
| GPT-5.5 | $110.00 | $3,300 |
| GPT-5.5 Pro | $460.00 | $13,800 |
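The Scenario 1 figures follow directly from the per-million prices: cost = input millions × input price + output millions × output price. This sketch reproduces them so you can substitute your own volumes. It assumes no cache hits and a flat 30-day month, matching the scenario as stated.

```python
from decimal import Decimal

# USD per 1M tokens (input, output) from the pricing table.
PRICES = {
    "DeepSeek V4 Pro": (Decimal("1.74"), Decimal("3.48")),
    "GPT-5.5": (Decimal("5.00"), Decimal("30.00")),
    "GPT-5.5 Pro": (Decimal("30.00"), Decimal("80.00")),
}


def scenario_cost(model: str, input_millions: int, output_millions: int, days: int = 1) -> Decimal:
    """Token cost for a fixed daily volume over `days`, ignoring cache discounts."""
    input_price, output_price = PRICES[model]
    daily = input_price * input_millions + output_price * output_millions
    return daily * days


if __name__ == "__main__":
    # Scenario 1: 10M input + 2M output tokens per day.
    for model in PRICES:
        daily = scenario_cost(model, 10, 2)
        monthly = scenario_cost(model, 10, 2, days=30)
        print(f"{model}: ${daily}/day, ${monthly}/month")
```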

Scenario 2: Enterprise Document Processing (1M documents/month, 30K tokens each)

| Model | Monthly Cost |
|---|---|
| DeepSeek V4 Flash | ~$1,400 |
| DeepSeek V4 Pro | ~$24,000 |
| GPT-5.5 | ~$270,000 |

Scenario 3: Code Generation Pipeline (100K requests/month)

| Model | Monthly Cost |
|---|---|
| DeepSeek V4 Pro | ~$550 |
| GPT-5.5 | ~$8,000 |

The output pricing gap tells the story. GPT-5.5 charges $30 per million output tokens — roughly 8.6x more than DeepSeek V4 Pro’s $3.48. For high-volume applications, that difference compounds rapidly. DeepSeek V4 Flash at $0.28 per million output tokens is over 100x cheaper than GPT-5.5 on output.
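How much the output gap dominates depends on your token mix. This sketch computes how many times more GPT-5.5 costs than DeepSeek V4 Pro as the share of output tokens varies, using the list prices above. At 0% output the ratio is the input ratio (about 2.9x); at 100% it reaches the 8.6x output ratio; Scenario 1's mix (2M of 12M tokens) lands in between. The output shares other than Scenario 1's are illustrative assumptions.

```python
def blended_price(input_price: float, output_price: float, output_share: float) -> float:
    """USD per 1M tokens when `output_share` of all billed tokens are output tokens."""
    return (1 - output_share) * input_price + output_share * output_price


def gpt55_vs_v4pro(output_share: float) -> float:
    """How many times more GPT-5.5 costs than DeepSeek V4 Pro for a given token mix."""
    return blended_price(5.00, 30.00, output_share) / blended_price(1.74, 3.48, output_share)


if __name__ == "__main__":
    for share in (0.0, 2 / 12, 0.5, 1.0):
        print(f"output share {share:.0%}: GPT-5.5 costs {gpt55_vs_v4pro(share):.1f}x more")
```

Output-heavy workloads such as long-form generation or agent loops that write a lot of code therefore sit near the top of that range.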

For developers exploring how to run models locally and cut API costs entirely, listen to Build an LLM from scratch on your laptop for a practical walkthrough.

Building AI feels impossible without a supercomputer, but you only need eight building blocks. Learn how to train your own model in under ten minutes.

Your choice depends on whether budget or autonomous performance is your primary constraint. Here is a quick decision framework:

Choose DeepSeek V4 Pro if you need:

Choose DeepSeek V4 Flash if you need:

Choose GPT-5.5 if you need:

Choose GPT-5.5 Pro if you need:

As models grow more autonomous and capable, the bigger picture questions matter too. In Life 3.0, MIT physicist Max Tegmark explores humanity’s future with superintelligent AI — asking what happens when machines design their own hardware and software. It is a thought-provoking read for anyone building with frontier AI models.

The AI model race moves fast. Staying current on how transformer architectures, MoE designs, and agentic frameworks work gives you a real advantage when choosing and using these tools.

BeFreed helps you keep up with AI developments through concise book summaries and AI-generated podcast episodes. Melanie Mitchell’s Artificial Intelligence breaks down the gap between AI hype and reality — perfect context for evaluating benchmark claims. For hands-on prompt skills, the Prompt engineering for better AI reasoning episode covers practical techniques to get better outputs from any model.

A captivating exploration of AI's potential and limitations, demystifying the hype and addressing crucial questions about machine intelligence.

Master prompt engineering techniques with examples of zero-shot, few-shot, and chain-of-thought prompting. Learn when to use each for optimal AI performance.

Try BeFreed today and turn complex AI concepts into quick, personalized learning sessions — whether you prefer reading summaries or listening to AI-generated podcasts.

As of April 2026, DeepSeek V4 vs GPT-5.5 represents the fundamental trade-off in frontier AI: cost versus polish. DeepSeek V4 is the clear winner on pricing, open-source access, and context window size. It proves that frontier-level intelligence does not require massive API budgets. GPT-5.5 is the clear winner on enterprise reliability, autonomous agent workflows, and complex software engineering. Your decision comes down to a simple question: Is the performance gap worth the price premium for your specific use case? For most high-volume applications, DeepSeek V4 delivers outstanding value. For mission-critical enterprise deployments that demand minimal human oversight, GPT-5.5 justifies the investment.